Nvidia will be disrupted from below, not from above

Nvidia's disruption will not happen the way you think. It will come from below.

Before diving in, please read this disclaimer.

Nvidia Is Not Going to Be Defeated the Way You Think

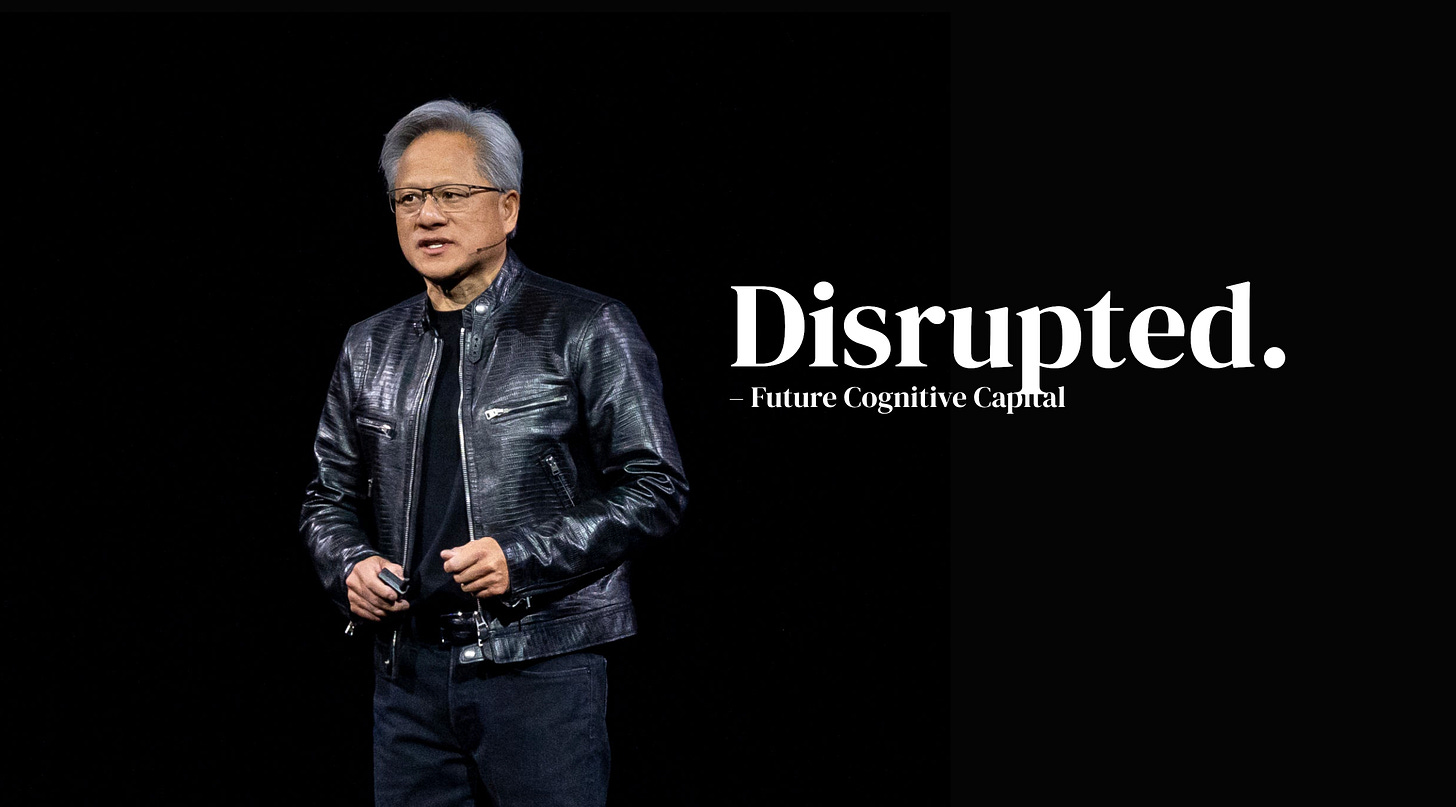

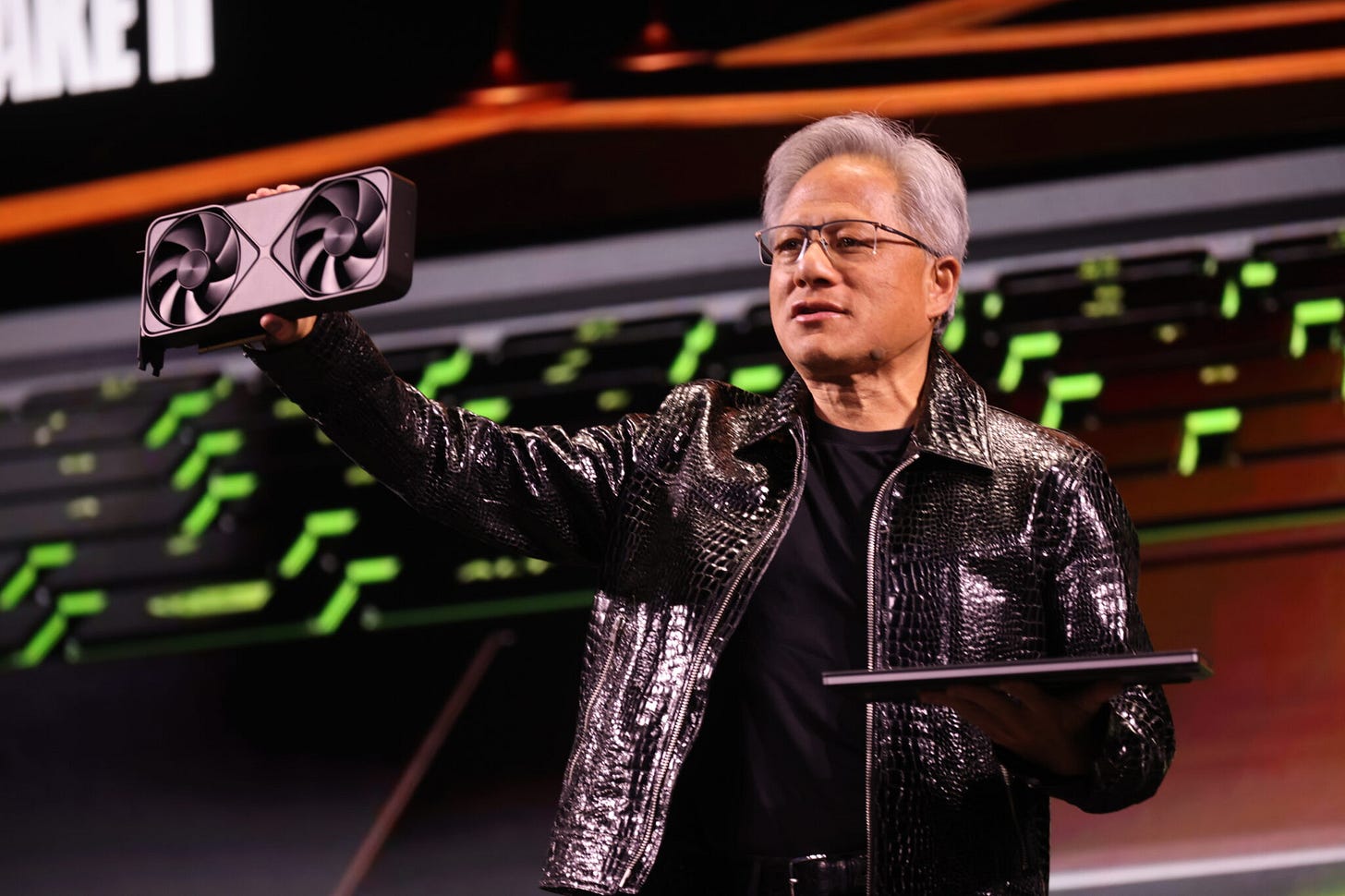

Jensen Huang walks on stage in a leather jacket and the crowd goes insane. Another GPU announcement, another generation of chips that makes the previous one look like a pocket calculator. H100, H200, B100, B200. The numbers go up, and so does the stock. The narrative stays the same: Nvidia owns AI. Full stop.

And honestly? That narrative isn’t wrong. Not yet.

Nvidia has something most companies spend decades trying to build and never do: a software moat that makes the hardware almost irrelevant. CUDA has been around since 2006. Nineteen years of researcher muscle memory, of PhD students learning to code on it, of production pipelines rebuilt around it. Switching away from CUDA doesn’t mean buying a different chip. It means retraining your entire engineering org, rewriting your stack, and accepting a painful transition period where your competitors aren’t slowing down. That’s a real gravity well.

So when people say Nvidia is vulnerable, they usually mean one of two things.

Either AMD catches up on raw performance, which has been "about to happen" for six years now. Or hyperscaler custom chips (Google's TPUs, Amazon's Trainium, Microsoft's Maia) eventually pull enough workload in-house to dent demand. Both are real risks, but neither is the one that keeps us up at night.

Here’s what does.

The entire Nvidia thesis is built on one assumption that almost nobody questions out loud: that AI compute demand will keep looking roughly like it does today. Massive training runs, thousands of H100s burning through gradient descent for weeks.

If that's the future, Nvidia wins: the moat holds, CUDA stays sticky, and the margins stay obscene.

But there's a shift happening that doesn't show up cleanly in the earnings calls yet. The center of gravity in AI is moving, slowly and then probably fast, from training to inference: from building the model to running it. Those are not the same market.

Not even close.

Training is a sport for giants. Ten, maybe twenty organizations in the world are running the kind of frontier training jobs that require Nvidia’s best iron. It’s a concentrated, capital-intensive, performance-at-any-cost market.

And Nvidia was built for exactly this.

Inference is something else entirely. It's thousands of companies, millions of API calls per day, latency constraints, cost-per-token spreadsheets, procurement committees. It's a market where "good enough, fast, and cheap" beats "perfect and expensive" almost every time. It's the market where disruption from the low end is not a theory; it's the playbook.

And then DeepSeek happened.

In January 2025, a Chinese lab released a model that matched GPT-4-class performance at a reported training cost that made the AI industry do a collective double-take. The immediate reaction focused on geopolitics and IP.

The more important signal, for us, was buried underneath: if frontier-level capability can be reached with dramatically less compute, the entire demand curve for training clusters gets repriced. Not eliminated. Repriced. And repricing is enough.

Clayton Christensen described this dynamic in 1997 and the tech industry has been re-learning it ever since. The dominant player gets disrupted not by a better version of themselves, but by something cheaper that serves a market they weren’t even watching. The disruption climbs…

… and by the time it’s visible at the top, it’s too late.

The threat to Nvidia isn't a better GPU. On that front, Nvidia is the undisputed leader. The threat might be the gradual end of needing one…

Got it?

So what actually happens to Nvidia’s moat when inference-first becomes the default? Which of its competitive dimensions survive the transition, and which ones quietly erode? And most importantly, what should you buy? That’s what we figure out below.